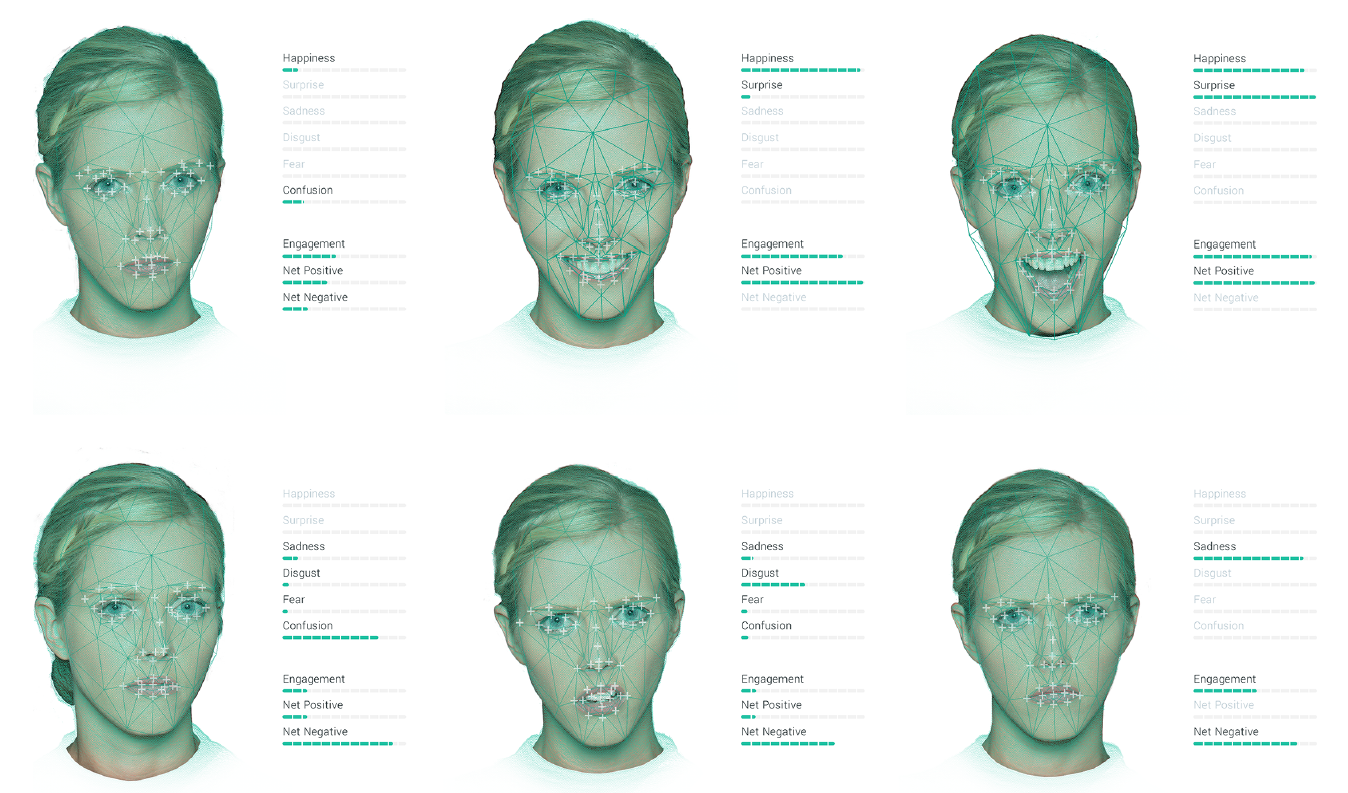

Long-standing research has established that seven basic emotions are universally recognized by humans, regardless of the age, gender, or ethnicity of either the subject or the observer. These are happiness, surprise, anger, sadness, disgust, fear, and contempt.

Our platform measures six of these: happiness, surprise, sadness, disgust, fear, and contempt. In place of anger, we measure confusion. Confusion is not one of the seven basic emotions, but after analyzing thousands of ads we found that anger was almost never elicited. Instead, we kept seeing a similar expression, confusion, which shares anger's eye and eyebrow movements but differs around the mouth. People rarely get angry at ads, but they often get confused, so confusion replaced anger on our roster.

- Happiness: One of the basic emotions, and synonymous with a smile: the cheeks raise and the corners of the mouth pull up.

- Surprise: One of the basic emotions, and synonymous with a 'shocked' expression - raised eyebrows, eyes wide, mouth open.

- Confusion: Synonymous with a lowering of the brows. Confusion is not one of the basic emotions, but it is a similar expression to anger and is displayed at much higher levels in response to advertising.

- Sadness: One of the basic emotions, and synonymous with the classic downturned mouth.

- Disgust: One of the basic emotions, and synonymous with an expression of distaste.

- Scared: One of the basic emotions, and synonymous with fear.

- Contempt: One of the basic emotions, and synonymous with a tightened and raised lip corner on one side of the face. It is a feeling of dislike of, and superiority over, another.

Additionally, we measure a series of proprietary metrics derived from those emotions and our own research. These can be used alongside the basic emotions to gain deeper insight into the videos we test.

- Engagement: When a participant has an expressive reaction to a stimulus, they are said to be ‘emotionally engaged’. The Engagement metric is the percentage of participants who showed any emotional reaction.

- Valence: A proprietary metric that shows how positive or negative a reaction is. It is essentially positive emotions minus negative emotions.

- Negativity: The percentage of people showing an emotion classified as negative.
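
To make the three derived metrics concrete, here is a minimal sketch of how they could be computed from per-participant results. It is illustrative only and not the platform's actual implementation: Valence is proprietary, so the simple "positive minus negative" share used here is an approximation of the description above. The function name `summarize`, the input shape, and the `POSITIVE` and `NEGATIVE` groupings are all assumptions, since the text does not state which emotions are classified as positive or negative.

```python
# Assumed groupings: the article does not say which emotions count as
# positive or negative, so these sets are hypothetical.
POSITIVE = {"happiness"}
NEGATIVE = {"confusion", "sadness", "disgust", "scared", "contempt"}


def summarize(reactions):
    """Compute illustrative Engagement, Negativity, and Valence scores.

    reactions: one set per participant, holding the names of the
    emotions that participant displayed (an empty set means no reaction).
    """
    n = len(reactions)
    if n == 0:
        raise ValueError("need at least one participant")

    # Engagement: share of participants who showed any emotion at all.
    engaged = sum(1 for r in reactions if r)
    # Participants who showed at least one positive / negative emotion.
    positive = sum(1 for r in reactions if r & POSITIVE)
    negative = sum(1 for r in reactions if r & NEGATIVE)

    return {
        "engagement": 100.0 * engaged / n,
        "negativity": 100.0 * negative / n,
        # Approximation of "positive emotions minus negative emotions".
        "valence": 100.0 * (positive - negative) / n,
    }


# Hypothetical panel of four participants.
panel = [{"happiness"}, {"surprise"}, set(), {"confusion", "disgust"}]
metrics = summarize(panel)
print(metrics)
```

In this made-up panel, three of the four participants react, one positively and one negatively, so the positive and negative shares cancel out in the Valence score. Surprise is treated as neither positive nor negative here, which is also an assumption.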